- #246

256bits

Gold Member

Comparing apples to oranges.

According to Virginia Tech, the crash rate of self-driving cars is lower than the national average per mile driven.

https://www.vtti.vt.edu/featured/?p=422

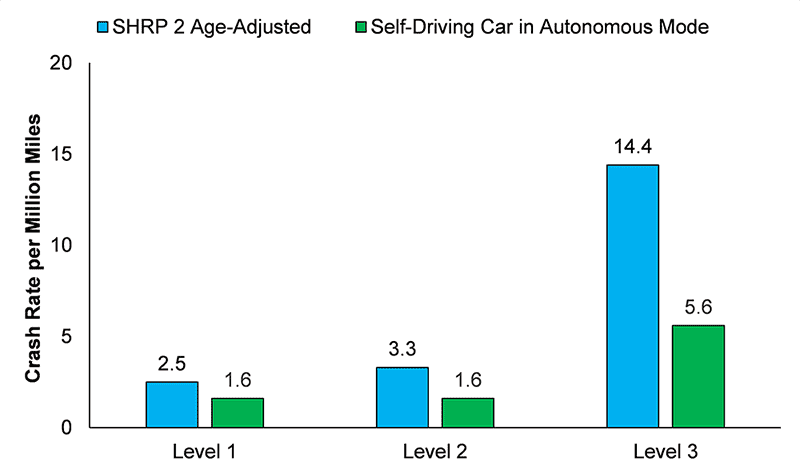

When compared to national crash rate estimates that control for unreported crashes (4.2 per million miles), the crash rates for the Self-Driving Car operating in autonomous mode when adjusted for crash severity (3.2 per million miles; Level 1 and Level 2 crashes) are lower. These findings reverse an initial assumption that the national crash rate (1.9 per million miles) would be lower than the Self-Driving Car crash rate in autonomous mode (8.7 per million miles) as they do not control for severity of crash or reporting requirements. Additionally, the observed crash rates in the SHRP 2 NDS, at all levels of severity, were higher than the Self-Driving Car rates. Estimated crash rates from SHRP 2 (age-adjusted) and Self-Driving Car are displayed in Figure 1.
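To put the quoted figures side by side, here is a rough Python sketch. The four rates are taken straight from the passage above; the dictionary keys and the two helper functions are my own names, not anything from the VTTI report.

```python
# Crash rates quoted in the passage above, in crashes per million miles.
# The key names are made up for this sketch.
RATES = {
    "national_reported": 1.9,  # national rate, not adjusted for unreported crashes
    "national_adjusted": 4.2,  # national estimate controlling for unreported crashes
    "sdc_raw": 8.7,            # Self-Driving Car, autonomous mode, unadjusted
    "sdc_adjusted": 3.2,       # Self-Driving Car, adjusted for severity (Level 1 and 2)
}


def crashes_per_million_miles(crashes, miles):
    """Hypothetical helper: normalize a crash count to a per-million-mile rate."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return crashes * 1_000_000 / miles


def rate_ratio(rate_a, rate_b):
    """How many times larger rate_a is than rate_b."""
    return rate_a / rate_b


# Unadjusted comparison: the self-driving rate looks several times higher.
raw_ratio = rate_ratio(RATES["sdc_raw"], RATES["national_reported"])

# Adjusted comparison: the self-driving rate comes out lower.
adjusted_ratio = rate_ratio(RATES["sdc_adjusted"], RATES["national_adjusted"])

print(f"unadjusted ratio (SDC / national): {raw_ratio:.2f}")
print(f"adjusted ratio (SDC / national):   {adjusted_ratio:.2f}")
```

The unadjusted ratio comes out around 4.58 and the adjusted one around 0.76, which is the reversal the quoted passage describes: which side looks worse depends on whether both rates are measured on the same basis.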