I created this thread because every online resource I have examined to date has been largely worthless: either totally over-engineered complexity or superficial garbage (can you tell I am irritated?). The problem is simple: optimize DSS (DeepSkyStacker) image stacking and post-processing based on quantitative image data. The essential metrics are 1) signal-to-noise ratio and 2) dynamic range.

Here’s the basic scenario: I am in a light-polluted urban environment. I image at low f-number, so falloff (image non-uniformity) is a significant issue. My primary goal in stacking is to completely remove the sky background across the entire field of view and to compress the dynamic range of the field of view to 'amplify' faint objects with respect to bright stars.

Let’s start with single frames, which already introduces potential confusion. I acquire frames in a 14-bit RAW format, but I have no way of directly accessing that data. So, for this thread, I used Nikon’s software to convert a single-channel 14-bit RAW image into a 3-channel 16-bit TIF (RGB format). Most likely, this is done by averaging 4 neighboring same-color pixels in the RAW data to generate a single TIF pixel (14 bits + 2 bits = 16 bits). Here are two images, one of the Pleiades taken at f/4, and the other of the Flame and Horsehead nebulae taken at f/2.8:

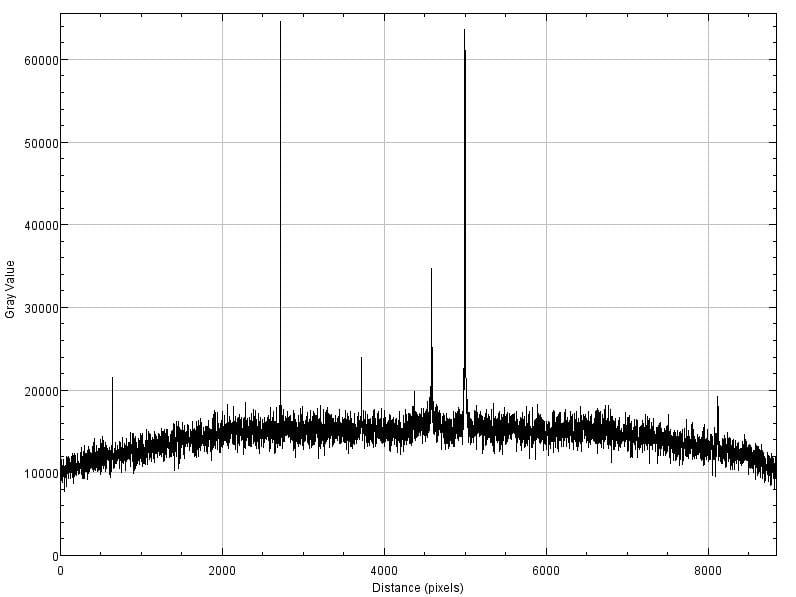

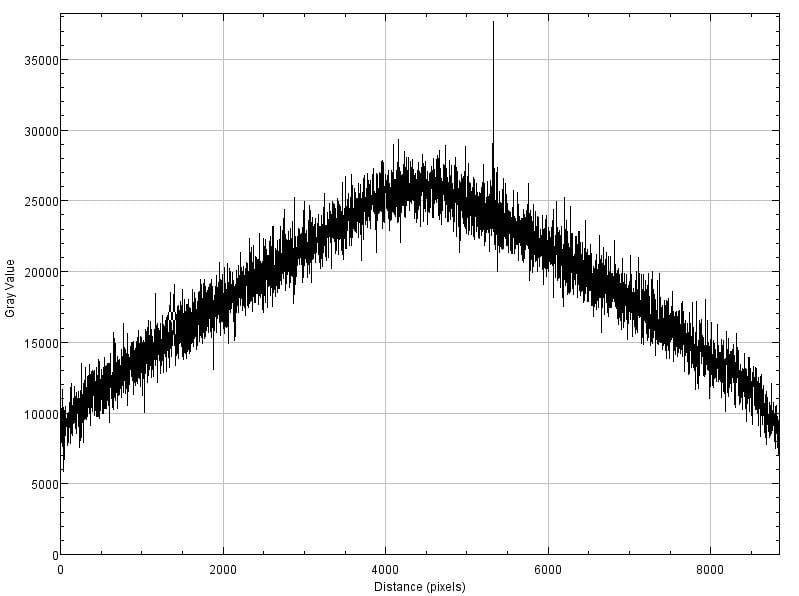

From these, I use ImageJ (in its Fiji distribution) to extract quantitative data. First, I’ll provide a ‘linescan’ from the upper left corner to the lower right corner for each image. This graph returns the greyscale value at each pixel along the line:

There are three basic results here. First, as you can see, the falloff is especially significant at f/2.8. Second, you can see the effect of noise (both images were acquired at ISO 1000), and third, you can see tall spikes where the scan line happens to intersect a star.
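For anyone who wants to reproduce this kind of linescan outside ImageJ, here is a minimal Python/NumPy sketch. It is not what I actually ran (I used ImageJ). The function name `diagonal_linescan` and the synthetic test frame (a made-up falloff profile, made-up noise level, and one fake star) are assumptions for illustration only.

```python
import numpy as np

def diagonal_linescan(image):
    """Return greyscale values along the line from the upper-left
    corner to the lower-right corner of a 2-D (single-channel) image."""
    height, width = image.shape
    n = max(height, width)  # one sample per pixel along the longer axis
    rows = np.round(np.linspace(0, height - 1, n)).astype(int)
    cols = np.round(np.linspace(0, width - 1, n)).astype(int)
    return image[rows, cols]

# Synthetic stand-in for a real frame (all numbers here are invented):
# a background that is brightest in the center and falls off to the corners,
# plus Gaussian noise and a single bright pixel acting as a "star".
rng = np.random.default_rng(0)
yy, xx = np.mgrid[0:200, 0:300]
r2 = ((yy - 99.5) / 99.5) ** 2 + ((xx - 149.5) / 149.5) ** 2
frame = 20000 * (1 - 0.25 * r2) + rng.normal(0, 1000, size=r2.shape)
frame[100, 150] = 60000  # this pixel lies on the sampled diagonal

profile = diagonal_linescan(frame)
print(len(profile), profile.max())
```

Plotting `profile` against sample index should show the same three features: the falloff envelope, the noise band, and a spike where the line crosses the star.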

Next, I can use ImageJ to determine a signal-to-noise ratio (SNR) by selecting a small star-free region in the image and computing the greyscale mean and standard deviation. I measured this in the center of the frame and also near a corner of the frame:

| Image | Center (mean ± SD) | Corner (mean ± SD) |
|---|---|---|
| Pleiades | 15640 ± 1317 | 13239 ± 1203 |
| Horsehead | 26004 ± 1285 | 12721 ± 1188 |
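To make the numbers above easier to compare, here is a small Python sketch that turns each mean and standard deviation into a ratio and a dB figure. Two assumptions to flag: the "signal" here is just the mean of a star-free patch (i.e. mostly sky background), and I use the 20·log10 amplitude convention for dB; other conventions exist. The helper names are made up for this post.

```python
import math

# (mean, standard deviation) in 16-bit greyscale units, from the measurements above
measurements = {
    ("pleiades", "center"): (15640, 1317),
    ("pleiades", "corner"): (13239, 1203),
    ("horsehead", "center"): (26004, 1285),
    ("horsehead", "corner"): (12721, 1188),
}

def snr(mean, std):
    """Plain ratio of mean to standard deviation."""
    return mean / std

def snr_db(mean, std):
    """Same ratio in dB, assuming the 20*log10 (amplitude) convention."""
    return 20 * math.log10(mean / std)

for (image, region), (mean, std) in measurements.items():
    print(f"{image:9s} {region:6s} SNR = {snr(mean, std):5.2f}  ({snr_db(mean, std):.1f} dB)")
```

The ratios come out between roughly 11 and 20, which shows how much of the measured "signal" in these patches is really sky background sitting on top of a similar noise level.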

This brings up a question: I measured the SNR in terms of greyscale values, not in terms of bits or dB. I can convert the greyscale to dB easily enough, but what will make more sense is to think in terms of bit depth. I’m honestly not sure how to interpret my values in those terms. It seems that I have about 11 bits of noise, so my 16-bit image has a dynamic range of only 5 bits (or 5 f-stops, or 3.7 stellar magnitudes)? That doesn’t make sense. Help?
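Here is the arithmetic behind that estimate as a short Python sketch, so it can be checked. It assumes one particular convention: noise "in bits" is log2 of the standard deviation, and dynamic range is full scale (65535 for a 16-bit TIF) divided by that standard deviation. Whether that is the right way to interpret the numbers is exactly what I am asking; the function names are mine.

```python
import math

FULL_SCALE_16BIT = 65535  # largest value a 16-bit pixel can hold

def noise_bits(std):
    """Noise expressed in bits, taken as log2 of the standard deviation."""
    return math.log2(std)

def dynamic_range_stops(std, full_scale=FULL_SCALE_16BIT):
    """Dynamic range in stops (factors of 2): full scale over the noise."""
    return math.log2(full_scale / std)

def stops_to_magnitudes(stops):
    """One stop is a factor of 2 in brightness; one magnitude is a
    factor of 10**0.4, so magnitudes = 2.5 * log10(2**stops)."""
    return 2.5 * math.log10(2.0 ** stops)

std = 1317  # Pleiades, center of frame
print(f"noise:         {noise_bits(std):.2f} bits")
print(f"dynamic range: {dynamic_range_stops(std):.2f} stops")
print(f"5 stops:       {stops_to_magnitudes(5):.2f} magnitudes")
```

Under this convention the noise is a bit over 10 bits and the remaining range is between 5 and 6 stops, which is the same ballpark as my rough figures above.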

Here’s why I care about that question: dynamic range is what I need to maximize in order to separate faint objects from the sky background. Certainly, noise reduction is important, but as you will see, I also need to boost the dynamic range, and post-processing after stacking does this.

I’ll stop here for now: I’ve established some basic image metrics and determined them for 2 sample images. Moving forward, I have to rely on linescans to illustrate the process; there’s no point in posting the images.
