
MichPod


The following is a kind of semiclassical argument, in the style of the discussions between Bohr and Einstein at the Solvay Conferences.

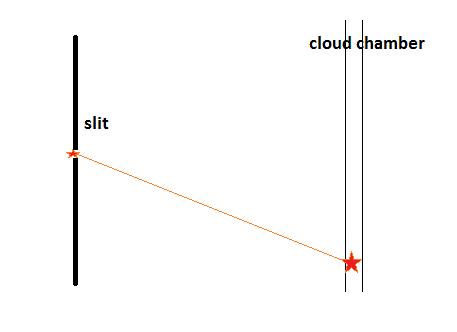

Suppose we have a single slit from which a particle may be emitted and, at a very large distance from it, a thin cloud chamber CC, which we use as a kind of screen to detect the particle.

Also suppose that we know the moment at which the particle passed through the slit (we could use a light source to fix that moment, or place another small cloud region at the slit so that we see a small bubble there when the particle passes).

As the particle travels from the slit to the CC (the cloud chamber), no force acts on it, so it moves in a straight line at constant speed and finally creates a bubble in the CC. We can calculate the speed of the particle as the distance from the slit to the point where the particle was detected in the CC, divided by the time between these two detections. By making the distance between the slit and the CC very large, we can reduce to any desired level the contribution of all the uncertainties involved in measuring the speed: the width of the slit (where the first detection is made), the size of the bubble in the CC (where the second detection is made), and the errors in the time measurement. Because we can make the error of the speed measurement arbitrarily small, we can make the error of the momentum measurement arbitrarily small too. The error in the coordinate measurement, meanwhile, is set by the size of the bubble in the CC. So in the end, the product of the coordinate error and the momentum error at the point where the particle was detected in the CC can (apparently) be made arbitrarily small, which would violate the Heisenberg uncertainty principle.
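To make the estimate above concrete, here is a minimal Python sketch of the naive error budget exactly as the argument states it: speed from time of flight, position error equal to the bubble size. The function name `naive_product`, the numerical values, and the worst-case linear addition of relative errors are all assumptions chosen for illustration; the sketch only reproduces the claimed trend and says nothing about where the reasoning might fail.

```python
"""Naive error budget for the time-of-flight argument (illustrative sketch).

Hypothetical inputs, not from the post: slit width a, bubble size b,
timing error tau, particle mass m, speed v. Relative errors are combined
as a worst-case linear sum, which is an assumption of this sketch.
"""

HBAR = 1.054571817e-34  # reduced Planck constant, J*s


def naive_product(L, a, b, tau, m, v):
    """Return (dx, dp, dx*dp) as the classical argument would estimate them."""
    T = L / v                   # time of flight between the two detections
    dL = a + b                  # uncertainty in the flight distance (both ends)
    rel_dv = dL / L + tau / T   # relative error of v = L / T
    dp = m * v * rel_dv         # momentum error inferred from the speed error
    dx = b                      # position error at the chamber: the bubble size
    return dx, dp, dx * dp


if __name__ == "__main__":
    # Illustrative electron-like numbers, chosen only for this example.
    m, v = 9.109e-31, 1.0e6         # kg, m/s
    a, b, tau = 1e-6, 1e-4, 1e-9    # m, m, s
    for L in (1.0, 1e2, 1e4):
        dx, dp, prod = naive_product(L, a, b, tau, m, v)
        print(f"L = {L:g} m: dx*dp = {prod:.3e} J*s, "
              f"ratio to hbar/2 = {prod / (HBAR / 2):.3e}")
```

With these assumed numbers the estimated product falls roughly as 1/L and drops below hbar/2 for large enough L, which is precisely the apparent paradox being asked about.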

Could you help me see where the error in this reasoning is?

