- #1

SleepyFace-_-


Hi Guys, fresh meat here.

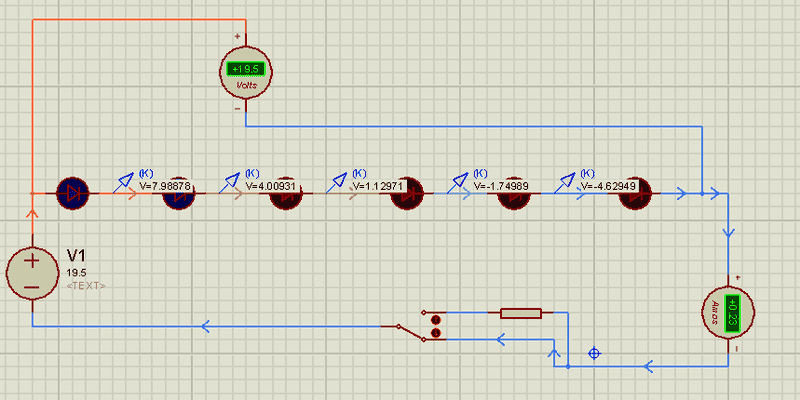

I have a simple LED series circuit I'm currently playing with. The LEDs are rated for 700 mA forward current, with forward voltages of 3.3 V (blue) and 2.2 V (red).

It's working fine. I have it rigged to a 19.5 V power supply and a 2.7 Ω, 25 W resistor.

I need 15.4 V out of the 19.5 V, meaning I have to drop 4.1 V @ 4.2 A. I should be using a 1 Ω resistor, but the only one I had that was even close in the wattage dept. was the 2.7 Ω.
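For anyone who wants to check the arithmetic, here is a rough sketch of the resistor math using the numbers above. It assumes the LED string holds a constant 15.4 V regardless of current, which is only an approximation, and the function names are just made up for illustration.

```python
def series_resistor(v_supply, v_leds, i_target):
    """Dropping resistor (ohms) and its dissipation (watts) for a target current.

    Assumes the LED string drops a fixed v_leds no matter the current.
    """
    v_drop = v_supply - v_leds   # voltage the resistor has to absorb
    r = v_drop / i_target        # Ohm's law: R = V / I
    p = v_drop * i_target        # power burned in the resistor: P = V * I
    return r, p


def current_with_resistor(v_supply, v_leds, r):
    """Current (amps) that actually flows with a given resistor fitted."""
    return (v_supply - v_leds) / r


# Numbers from the post: 19.5 V supply, 15.4 V across the LEDs, 4.2 A target.
r_ideal, p_ideal = series_resistor(19.5, 15.4, 4.2)
i_actual = current_with_resistor(19.5, 15.4, 2.7)

print(f"ideal resistor: {r_ideal:.2f} ohm, dissipating {p_ideal:.2f} W")
print(f"current with the 2.7 ohm resistor: {i_actual:.2f} A")
```

Under that fixed-voltage assumption, the ideal value comes out just under 1 Ω, and the 2.7 Ω resistor lets through roughly 1.5 A rather than the 4.2 A target.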

In my effort to increase my exp points while I wait for more components, I decided to start messing with circuit simulations, so I created the same circuit with no resistor and started taking readings... The results seriously messed with the thin layer of understanding I thought I had:

The LEDs are set to the correct voltages and current draws, yet I'm seeing a 0.23 A reading on the ammeter.

I have a feeling I am missing something here... I tried using both a DC voltage source and a battery, with the same result... Can anyone shed some light on this?

Thanks for your help!
